Why Most AI Coding Workflows Fail — and How Mine Cut 2 Weeks of Work to 2 Days

Lessons from building production features with AI, large codebases, and real constraints.

Strong suggestion: don’t copy my workflow — read through the failures, mistakes, and learnings, and use them to craft a workflow that works for you.

If you want to jump straight to my current workflow, go here →

👉 Iteration 7: My Current Workflow (What Finally Worked)

I’ve been thinking about writing this for a while.

I’m still learning, but over the past year I’ve been coding with AI almost every day, both in personal projects and in production systems at work. I’m not claiming this is the best way to use AI, but this is the workflow that helped me compress what usually takes two to three weeks into two to three days.

This blog isn’t about a single “aha” moment.

It’s about iterations — where things worked, where they broke, and what each failure taught me.

Iteration 1: AI Felt Like Magic

When I first started coding with AI, I was honestly impressed.

Things that used to take real mental effort were getting done effortlessly:

Solving a LeetCode problem

Creating a simple UI with React and styling

Writing a simple CRUD API for a model

AI handled all of this extremely well.

At this stage, I was mostly using Cursor for personal projects. These projects were small, the codebase was tiny, and the AI had very little context to reason about.

Naturally, I assumed:

“If this works so well for personal projects, it should work even better for office projects.”

That assumption didn’t last long.

Iteration 2: Production Codebases Change Everything

Around 6–8 months ago, my company gave me a paid Cursor license.

I started using it on our actual product codebase.

The difference was immediate.

Same prompts.

Same workflow.

Completely different results.

In personal projects, the AI was dealing with hundreds or a few thousand lines of code.

In production, it had to reason about tens of thousands of lines, multiple modules, and years of architectural decisions.

That’s when things started breaking.

Iteration 3: Ask → Plan → Build (Worked… Until It Didn’t)

To regain control, I introduced structure.

Before implementing any feature, I started doing this:

Switch Cursor to Ask mode

Ask it to understand specific folders or modules

Explain the feature I wanted to build

Ask it to give me a plan

Switch to Agent mode and ask it to implement the plan

This was before Cursor even had a dedicated Plan mode.

And honestly — it worked really well.

I completed multiple backend-heavy features using this approach, and I was genuinely surprised by how well the AI understood the code and executed changes.

Until I hit a full-stack feature.

Iteration 4: Full-Stack Complexity Broke the Workflow

The next feature involved everything:

Frontend

Backend

Queues and background jobs

Socket events

Real-time UI updates

State management

The number of moving parts exploded.

I followed the same workflow.

The output was bad.

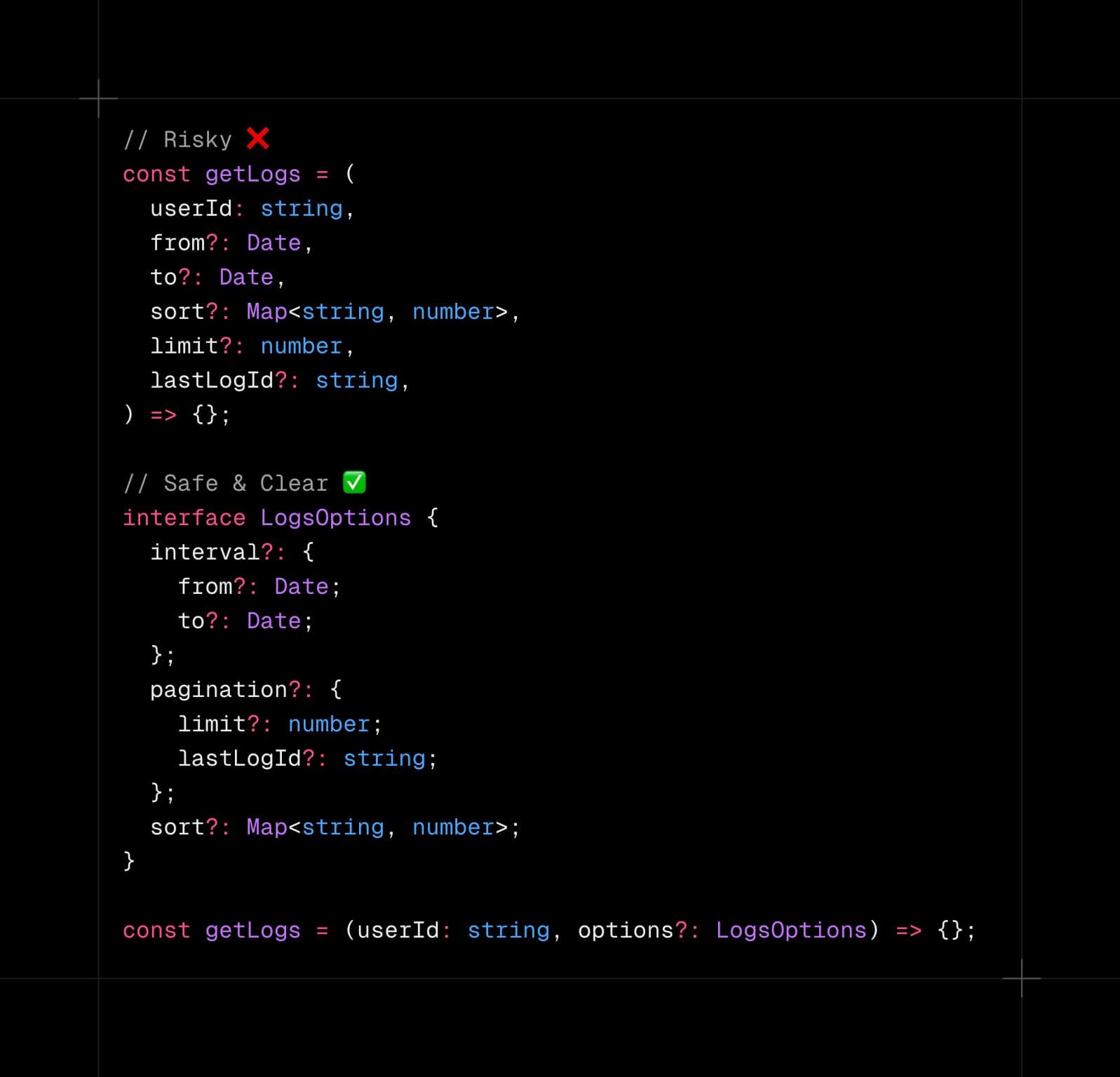

TypeScript types replaced with any and unknown

Method signature mismatches

Code written just to “make it work”

Hidden bugs everywhere
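To make the any problem concrete, here is a minimal sketch of the kind of code I mean. The event shape and function names are hypothetical, made up for illustration rather than taken from my actual codebase.

```typescript
// Hypothetical socket event payload; the names are illustrative only.
interface JobProgressEvent {
  jobId: string;
  percent: number; // 0-100
}

// The "make it work" version: `any` silences the compiler,
// so a misspelled field only shows up at runtime.
function formatProgressLoose(event: any): string {
  // Typo: jobID instead of jobId, so this reads undefined.
  return `${event.jobID}: ${event.percent}%`;
}

// The typed version: the same typo would be a compile error here.
function formatProgressTyped(event: JobProgressEvent): string {
  return `${event.jobId}: ${event.percent}%`;
}

const sample: JobProgressEvent = { jobId: "job-42", percent: 80 };
console.log(formatProgressLoose(sample)); // "undefined: 80%"
console.log(formatProgressTyped(sample)); // "job-42: 80%"
```

Both functions compile. Only one of them is correct, and nothing in the build tells you which.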

That’s when I realized something important:

The workflow didn’t change.

The task complexity did.

Iteration 5: Treating AI Like a Fresh Developer

I asked myself a simple question:

“If I were giving this task to a fresh developer, how would I do it?”

I wouldn’t explain everything at once.

So I split the feature into independent chunks:

Backend

Frontend

The backend agent didn’t know about the frontend.

The frontend agent only knew the API contract.

For the backend, I gave very clear instructions:

Queue behaviour

API design

Event flow

Then I handed the API contract to the frontend.
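For illustration, a contract like this can be a single file of shared types plus a small runtime guard. This is a sketch under my own assumptions: the "export" feature, the field names, and the event name are all hypothetical, and in a real repo these would be exported from a shared module.

```typescript
// Hypothetical contract for an "export report" feature; every name is illustrative.
const EXPORT_STATUSES = ["queued", "processing", "done", "failed"] as const;
type ExportStatus = (typeof EXPORT_STATUSES)[number];

// Request/response pair the backend agent implements and the frontend agent consumes.
interface CreateExportRequest {
  reportId: string;
  format: "csv" | "pdf";
}

interface CreateExportResponse {
  exportId: string;
  status: ExportStatus;
}

// Socket event the backend emits while the background job runs.
interface ExportProgressEvent {
  type: "export.progress";
  exportId: string;
  status: ExportStatus;
  percent: number;
}

// Runtime guard so the frontend rejects payloads that drift from the contract.
function isExportProgressEvent(value: unknown): value is ExportProgressEvent {
  if (typeof value !== "object" || value === null) return false;
  const candidate = value as Record<string, unknown>;
  const status = candidate["status"];
  return (
    candidate["type"] === "export.progress" &&
    typeof candidate["exportId"] === "string" &&
    typeof candidate["percent"] === "number" &&
    typeof status === "string" &&
    EXPORT_STATUSES.some((s) => s === status)
  );
}

// Frontend usage: the handler only needs the contract, not the backend internals.
function handleSocketMessage(raw: unknown): string {
  if (!isExportProgressEvent(raw)) {
    return "ignored: payload does not match the contract";
  }
  return `export ${raw.exportId} is ${raw.status} (${raw.percent}%)`;
}
```

The point of the design is that the frontend agent gets this file and nothing else from the backend.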

This worked beautifully.

Until I tried spinning up a new microservice.

Iteration 6: Plan Mode Enters (And Still Fails at Scale)

Around this time, Cursor launched Plan mode, and naturally, I started using it heavily.

On paper, this felt like the missing piece.

The idea was simple:

Create a detailed plan in markdown

Let the agent refer to it instead of holding everything in memory

Execute step by step

For medium-sized features, this worked really well.

But then I hit a much bigger task.

This feature involved:

Creating a new microservice

Linking it with existing services

Integrating cutting-edge tools

Adopting modern Node.js and TypeScript patterns

Handling cross-service communication

I brainstormed deeply with the AI and created a very detailed plan.

The plan itself was solid.

The execution wasn’t.

any everywhere

Interface mismatches

Build failures

Runtime issues

That’s when I finally understood the real problem:

Even with Plan mode, a single massive plan is still too much context.

Plan mode helped — but it didn’t remove the need to break the problem down further.

Why This Fails (Even for Humans)

Imagine your CTO hands you:

An 800–900 page technical requirements document that includes:

Multiple microservices

Multiple protocols like Kafka or gRPC

Multiple external dependencies

…and asks you to implement everything in a single go.

You wouldn’t be productive.

That’s why we have:

Epics

User stories

Tasks

But when it comes to AI, we forget this and treat it like a supernatural entity.

It’s not.

It has limits.

Iteration 7: My Current Workflow (What Finally Worked)

This is the workflow that actually stuck.

Step 1: Start With High-Level Architecture

No function signatures.

No deep implementation details.

Just:

Models

Responsibilities

Clear system boundaries

Step 2: Let AI Propose Mid-Level Phases

I ask the AI to break the feature into phases.

Usually, I get something like:

8 phases

Each phase with 4–5 bullet points

Now the big feature is split into manageable chunks.

Step 3: Create a Separate Plan for Each Phase

Each phase gets its own plan:

Separate document

Less than ~500 lines

Focused and crisp

A 5,000-line plan is no better than no plan.
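If you want to enforce the size limit rather than eyeball it, a tiny check is enough. This is a sketch, not something from my actual setup: the PhasePlan shape and the function names are assumptions, and the 500-line threshold is the rough limit mentioned above, not a hard rule.

```typescript
// Hypothetical representation of one per-phase plan document.
interface PhasePlan {
  phase: number;
  title: string;
  markdown: string; // full text of the plan document
}

// Rough limit from the workflow above; tune it to your own tolerance.
const MAX_PLAN_LINES = 500;

// Counts newline-separated lines, ignoring a single trailing newline.
function countLines(text: string): number {
  const trimmed = text.replace(/\n$/, "");
  if (trimmed.length === 0) return 0;
  return trimmed.split("\n").length;
}

// Returns the plans that exceed the limit and should be split further.
function findOversizedPlans(
  plans: PhasePlan[],
  maxLines: number = MAX_PLAN_LINES
): PhasePlan[] {
  return plans.filter((plan) => countLines(plan.markdown) > maxLines);
}

// Usage: flag anything that needs splitting before the build starts.
const examplePlans: PhasePlan[] = [
  { phase: 1, title: "Data models", markdown: "# Phase 1\n- Define models\n- Add migrations\n" },
];
for (const plan of findOversizedPlans(examplePlans)) {
  console.log(`Phase ${plan.phase} (${plan.title}) is too long, split it`);
}
```

The check takes strings rather than file paths so it stays independent of how you store your plans.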

Step 4: Manual Review Is Mandatory

Before building anything, I review each plan:

Fix naming

Correct modeling flaws

Adjust structure

You cannot blindly trust AI.

You still own the architecture.

Step 5: Build Phase by Phase

Slow.

Deliberate.

Controlled.

Phase 1 → Build

Phase 2 → Build

…

Phase 8 → Build

This is where everything finally clicked.
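The discipline here can be sketched as a loop that refuses to start the next phase until the current one passes a check. To be clear, this is my illustration of the idea, not a tool I run: the Phase shape is hypothetical, and verify stands in for whatever gate you trust (a type-check, a build, tests, or your own review).

```typescript
// Hypothetical description of one phase; build and verify are supplied by you.
interface Phase {
  name: string;
  build: () => void; // e.g. hand the phase plan to the agent
  verify: () => boolean; // e.g. type-check, build, tests, manual sign-off
}

interface RunResult {
  completed: string[]; // phases that were built and verified
  failedAt: string | null; // first phase whose verification failed, if any
}

// Builds phases in order and stops at the first one that fails verification,
// so later phases never pile on top of a broken foundation.
function runPhases(phases: Phase[]): RunResult {
  const completed: string[] = [];
  for (const phase of phases) {
    phase.build();
    if (!phase.verify()) {
      return { completed, failedAt: phase.name };
    }
    completed.push(phase.name);
  }
  return { completed, failedAt: null };
}

// Usage with stubbed phases: phase 2 fails, so phase 3 is never built.
const outcome = runPhases([
  { name: "phase-1", build: () => {}, verify: () => true },
  { name: "phase-2", build: () => {}, verify: () => false },
  { name: "phase-3", build: () => {}, verify: () => true },
]);
console.log(outcome); // completed: ["phase-1"], failedAt: "phase-2"
```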

The Result

The entire feature was completed in 2–3 days.

Without this workflow, it would have easily taken weeks.

The code was:

Clean

Strongly typed

Free of unnecessary any

Easy to reason about and maintain

Final Thoughts

AI productivity gains are real — but only if you respect its limits.

Think of AI as:

A very fast fresh developer who needs clear, scoped, precise instructions

This is the seventh iteration of my workflow, and it will keep evolving as the complexity increases.

If you think I missed something, tell me.

If you’ve found a better approach, share it.

Let’s learn from each other and write better code with AI, not just more code.

Cheers!