I Reverse Engineered Claude's UI Widget — And It Changed How I Think About Building LLM Apps

👋 Hey there! I’m a Full Stack Developer with 3+ years of experience building top-notch web and mobile apps. I’m here to help you craft the best app for your product using tech stacks like MERN, Flutter, React Native, Django, and more. 🚀 Currently on a mission to build an app that ensures you never forget a crucial task (believe me, it’s a total game changer!). 🚀💡

So we've all seen Anthropic ship features at an incredible pace, right? The easy assumption is: ah, they probably have Mythos, a model more powerful than anything publicly available, and they're using it internally to move fast.

But that's not the only reason. And honestly, it's not even the most interesting one.

About three months back, I started using Claude as my primary assistant for pretty much everything. And I noticed something that genuinely caught my attention.

When I ask Claude something simple, it responds in plain text. But when the answer is complex, or when there's a lot of information to show — it renders a UI right inside the Claude app. An interactive widget I can actually play with. Not just text. A real interface.

I started wondering — how are they doing this? 🤔

My First Guess Was Wrong

My initial assumption was that Anthropic had built a library of React components and given the LLM instructions on when and how to use each one. When Claude responded, it would generate a JSON payload, and the frontend would map that payload to those components.

That seemed reasonable to me.
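Here's roughly what I pictured, as a minimal sketch. This is my guessed architecture, not Anthropic's code. The payload shape and the names (`stat_card`, `bar_list`, `render_payload`) are hypothetical, and plain Python functions that return HTML strings stand in for React components.

```python
import json
from html import escape
from typing import Callable

# Hypothetical component registry: each "type" the model is allowed to emit
# maps to a render function. (In a real frontend these would be React components.)
def stat_card(props: dict) -> str:
    return (f'<div class="stat-card"><span>{escape(props["label"])}</span>'
            f'<strong>{escape(str(props["value"]))}</strong></div>')

def bar_list(props: dict) -> str:
    items = "".join(
        f'<li>{escape(item["name"])}: {escape(str(item["value"]))}</li>'
        for item in props["items"]
    )
    return f'<ul class="bar-list">{items}</ul>'

COMPONENTS: dict[str, Callable[[dict], str]] = {
    "stat_card": stat_card,
    "bar_list": bar_list,
}

def render_payload(raw: str) -> str:
    """Render a model's JSON payload using only the predefined components."""
    payload = json.loads(raw)
    parts = []
    for block in payload["components"]:
        renderer = COMPONENTS.get(block["type"])
        if renderer is None:
            # The model asked for something the registry doesn't have.
            raise ValueError(f"unknown component: {block['type']}")
        parts.append(renderer(block["props"]))
    return "\n".join(parts)

# A payload the model might return under this (hypothetical) scheme.
example = json.dumps({
    "components": [
        {"type": "stat_card", "props": {"label": "Tasks done", "value": 12}},
        {"type": "bar_list", "props": {"items": [{"name": "Mon", "value": 3}]}},
    ]
})
print(render_payload(example))
```

The catch is in `render_payload`: any component type outside `COMPONENTS` is an error, so the UI can never be richer than the list someone built ahead of time.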

I was completely wrong.

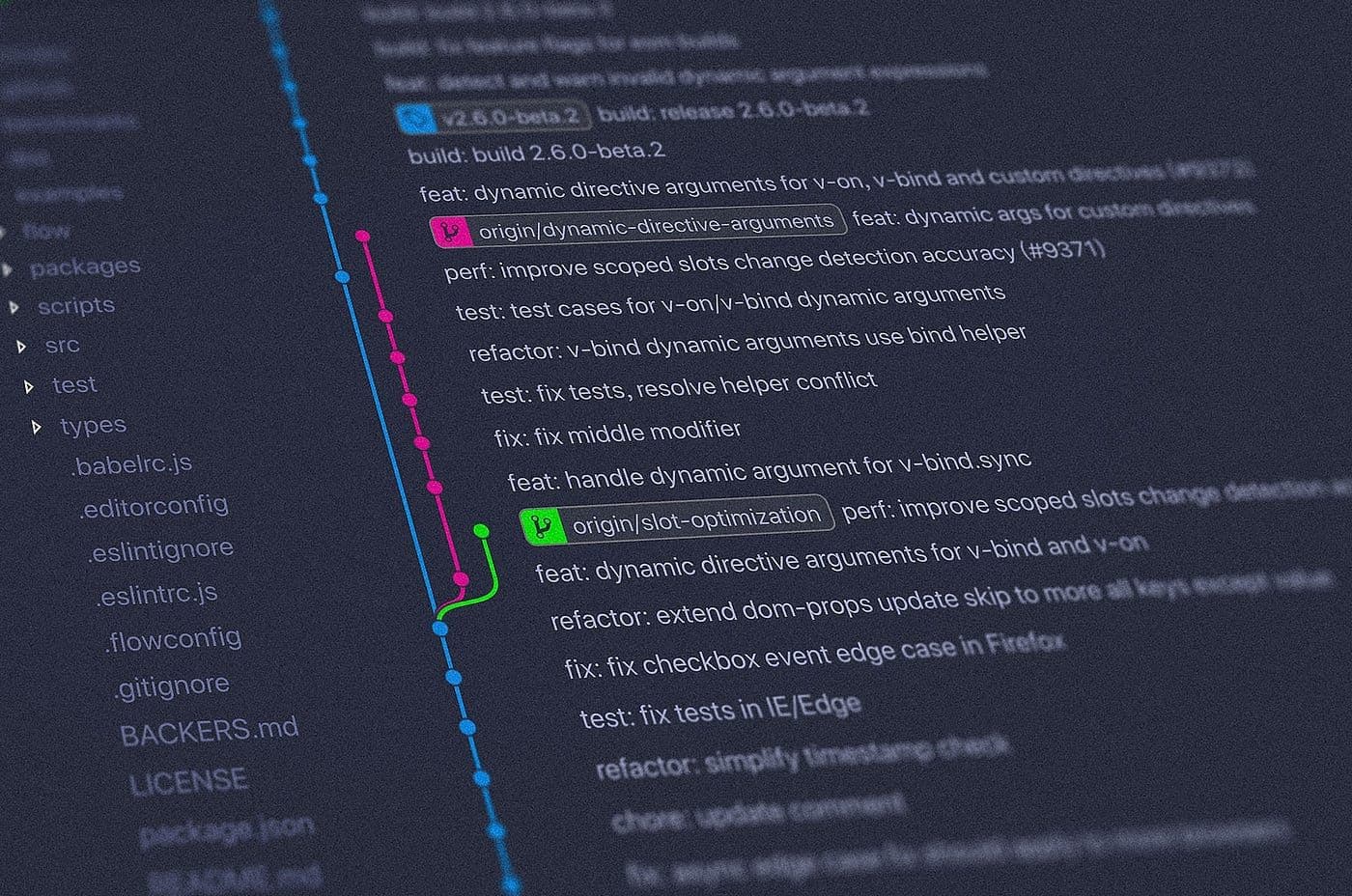

So I opened Claude on the web, pulled up the browser's Network tab, and inspected the actual response to reverse engineer how Claude renders its UI.

What I found was surprising. 👀

What's Actually Happening

The response wasn't JSON. It wasn't a reference to any predefined component.

It was plain HTML, CSS, and JavaScript, all in a single file with inline styles.

That's when it clicked. They're not using a component library to build the UI; they went a level below that. They gave Claude a design system (the design principles, the basic styling rules, how a button should look and behave) and then asked Claude to generate the HTML, CSS, and JavaScript on its own.

They take that single HTML file and render it in an iframe.
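Here's a minimal sketch of that rendering step, assuming the host page receives the generated document as a string. I haven't seen Anthropic's actual rendering code; `wrap_in_iframe` and the sandbox policy are my own choices. The `srcdoc` and `sandbox` attributes are standard HTML: `srcdoc` embeds the document inline, and granting `allow-scripts` without `allow-same-origin` lets the widget's JavaScript run while giving the frame an opaque origin, so it can't reach the parent page's DOM, cookies, or storage.

```python
from html import escape

def wrap_in_iframe(generated_html: str, title: str = "Generated widget") -> str:
    """Embed model-generated HTML in a sandboxed iframe via srcdoc (hypothetical helper)."""
    # quote=True escapes & < > " ' so the whole document fits safely inside
    # a double-quoted attribute; the browser decodes it back before rendering.
    srcdoc = escape(generated_html, quote=True)
    # allow-scripts only: the widget's JS runs, but without allow-same-origin
    # the frame is isolated from the parent page.
    return (
        f'<iframe sandbox="allow-scripts" title="{escape(title)}" '
        f'srcdoc="{srcdoc}" style="width:100%;border:0"></iframe>'
    )

# Stand-in for a single self-contained file the model might generate.
model_output = """<!doctype html>
<html><body>
  <button style="padding:8px 12px;border-radius:8px"
          onclick="this.textContent = 'Clicked!'">Click me</button>
</body></html>"""

print(wrap_in_iframe(model_output))
```

The host needs only this one generic container, no matter what the model decides to build. (In React, the same attribute is the `srcDoc` prop.)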

"They didn't build the UI. They taught Claude how to build it."

Think about what this means. LLMs write good code. Anthropic gave Claude a design system and told it to generate the UI. And as the model gets smarter, the UI it generates gets better, automatically, without Anthropic changing a single line of its own code. 🚀

Splitting the Hands from the Brain

I later came across a blog post from Anthropic that described this concept — splitting the hands from the brain.

The idea is this: most developers write prompts and instructions that are tightly coupled to a specific model. If a model doesn't do something well, you go in and patch the prompt. You hardcode workarounds. You over-instruct.

What Anthropic is doing instead is providing raw tools to the LLM and letting the model figure out how to use them.

"The instructions stay the same. The model just gets better at using them."

So if you're using Claude Sonnet 4.6, the UI it generates is solid. Move to Opus and it gets significantly better. Move to Mythos and it's on another level entirely. And Anthropic didn't have to touch their instructions. The model just got better at using the same tools.

That's the key insight. 💡

Why This Should Change How We Build LLM Apps

We have access to the same models Anthropic is using. But what are most of us doing? We're hardcoding logic into prompts. We're writing harnesses that are tightly coupled to a specific model's behavior. And the moment a smarter model ships, those harnesses become stale.

We should stop encoding specific instructions into prompts and start thinking about building better tools with clearer interfaces — tools that any LLM, today or two years from now, can pick up and use effectively.
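To make the contrast concrete, here's a minimal sketch of the "tool" side, assuming the tool format of Anthropic's Messages API (a `name`, a `description`, and a JSON Schema `input_schema`). The `render_widget` tool, its handler, and the design rules in its description are hypothetical placeholders, not Anthropic's internal tool. Notice what's missing: no "if the user asks for a chart, use a bar chart" rules. The tool states a contract and the design constraints, and deciding what to build is left to the model.

```python
from html import escape

# Hypothetical tool definition in the shape the Messages API accepts in `tools`.
# The name, fields, and design rules below are illustrative placeholders.
RENDER_WIDGET_TOOL = {
    "name": "render_widget",
    "description": (
        "Render an interactive widget for the user. Provide one complete, "
        "self-contained HTML document with inline CSS and JavaScript. "
        "Follow the design system: 8px spacing grid, rounded corners, "
        "system font stack, accessible color contrast."
    ),
    "input_schema": {
        "type": "object",
        "properties": {
            "title": {"type": "string", "description": "Short widget title."},
            "html": {"type": "string", "description": "Full HTML document."},
        },
        "required": ["title", "html"],
    },
}

def handle_render_widget(tool_input: dict) -> str:
    """Validate the model's tool input and wrap it for sandboxed display."""
    # Every required field must be a non-empty string.
    missing = [k for k in RENDER_WIDGET_TOOL["input_schema"]["required"]
               if not isinstance(tool_input.get(k), str) or not tool_input[k]]
    if missing:
        raise ValueError(f"missing or empty fields: {missing}")
    return (f'<iframe sandbox="allow-scripts" title="{escape(tool_input["title"])}" '
            f'srcdoc="{escape(tool_input["html"])}"></iframe>')

# Simulated tool call, shaped like the `input` of a tool_use block.
call = {"title": "Weekly tasks", "html": "<!doctype html><p>3 of 5 done</p>"}
print(handle_render_widget(call))
```

In this sketch, nothing in the tool or its handler refers to a particular model, so moving to a newer one leaves both untouched.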

"Stop writing instructions for the model. Start building tools for it."

It's Not as Simple as Writing a Good Prompt

I'll be honest — I used to think building LLM apps was straightforward. Give it a good prompt, tweak it when something breaks, move on.

That's not how it works.

Architecting an agent properly takes real thought. What Anthropic is doing is genuinely different from what most companies are doing right now. We're still treating AI like a rule-following system — developers trying to hardcode intelligence into a prompt instead of letting the model use its own.

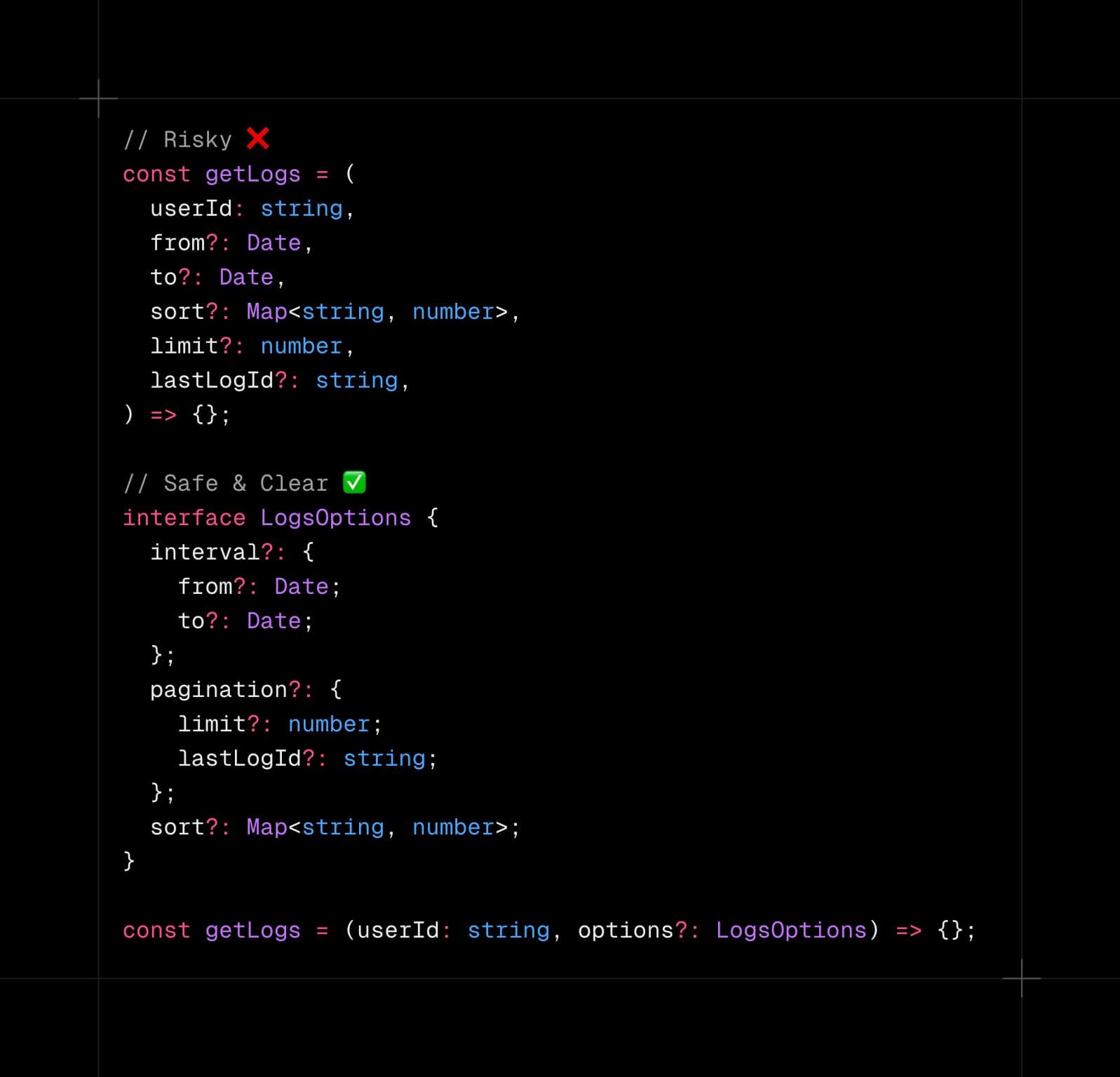

Here's a better way to think about it: imagine you're handed a fixed set of components and told to build something. No flexibility, no room to think. You just assemble what's given.

Now imagine instead someone hands you a design system — guidelines, principles, a foundation — and says, make it look great, adapt as needed. Suddenly there's room for judgment. For creativity. For the model to actually do what it's good at.

"Give a model components, and it assembles. Give it a design system, and it creates."

That's what Anthropic figured out. And I think it's worth all of us taking a step back and rethinking how we're building.

Hope you enjoyed the read. See you in the next blog!